Why Multi-Agent Systems Fail in Enterprise AI

Explore the complexities of multi-agent systems in enterprise AI projects. Learn why 40% of these initiatives fail within six months and how poor orchestration can amplify mistakes. Discover the projected growth of the autonomous AI

Part 4 of the AI Chief of Staff Series | 3XNL.ai

When One Agent Isn't Enough (And Why Adding More Is Harder Than It Sounds)

You built the agent. It works. Now you want more of them. Congratulations, you've just entered the part of the story where 40% of enterprise AI projects fail within six months.

You Built the Thing. It Works. Now You've Created a New Problem.

You did the architecture. The memory layer. The task system. The decision rules. The operating cadence. Your AI Chief of Staff is actually acting like a Chief of Staff.

And now you've noticed something inconvenient.

It can only do one thing at a time.

The research agent, the comms agent, and the financial reporting agent are all sitting in your head, taunting you. If one specialized agent is useful, why not a whole team of them? Let them work in parallel. Let them hand off to each other. Wake up to a fully coordinated AI operation running while you sleep.

That vision is real. It's already in production at the most technically advanced organizations in the world.

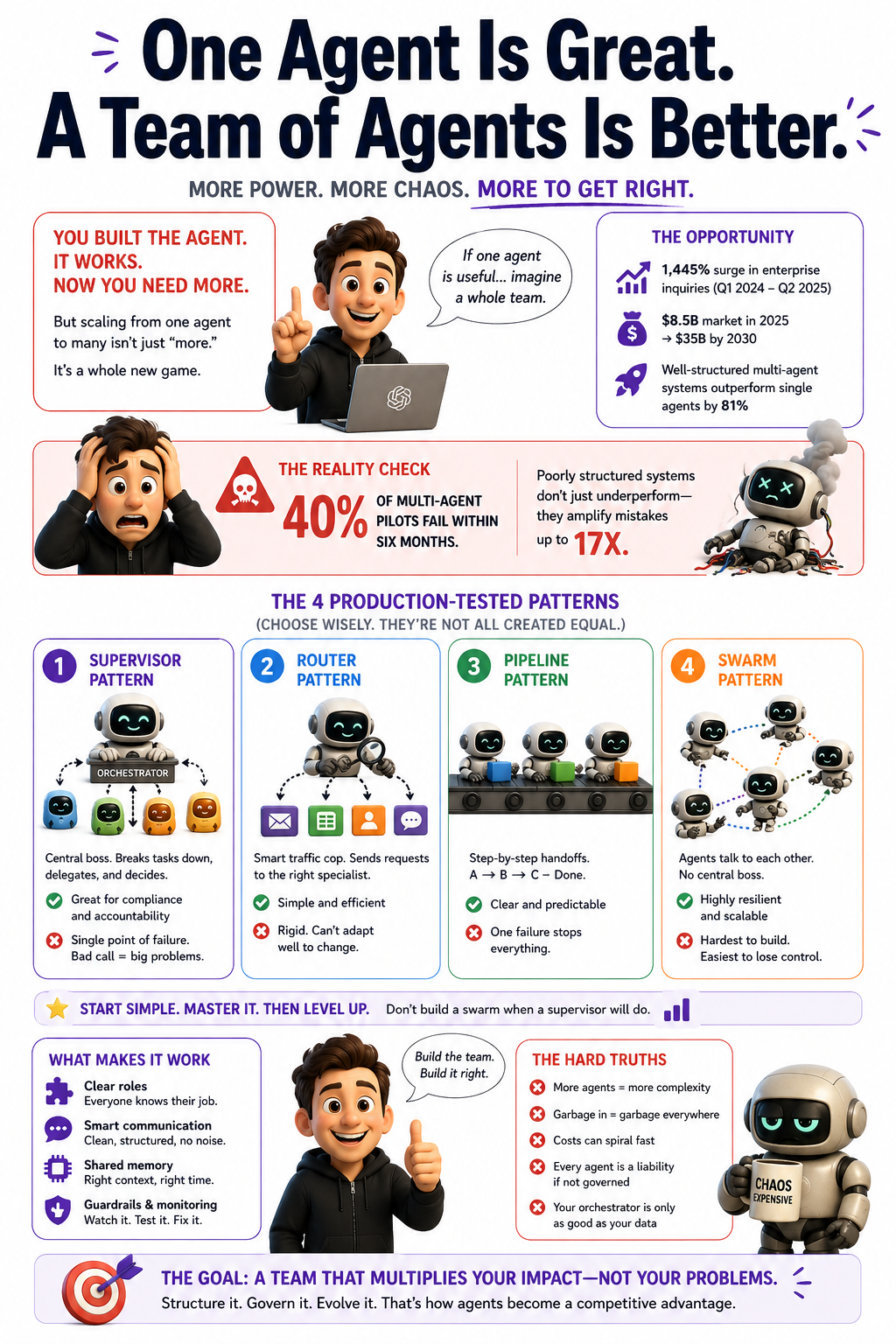

Gartner recorded a 1,445% surge in enterprise inquiries about multi-agent systems between Q1 2024 and Q2 2025. That's not a hype number. That's a "we have a budget meeting next Tuesday" number.

The autonomous AI agent market is projected to hit $8.5 billion this year and $35 billion by 2030.

Here's what comes with that upside: 40% of multi-agent pilots fail within 6 months of production deployment. Poorly structured multi-agent systems don't multiply your capabilities. They amplify your mistakes up to 17 times.

Do not reduce them. Amplify them. Seventeen times.

So yes, the vision is real. The path to it is not what most people think it is.

The path to it is, in fact, the part most people skip on their way to building three more agents.

What "Multi-Agent" Actually Means

(Because the Word Is Doing a Lot of Heavy Lifting Right Now)

"Multi-agent" gets used to mean everything from "two chatbots that can read each other's outputs" to "a fully autonomous AI network running enterprise operations at scale." Those are not the same thing.

Treating them as if they are leads to some very expensive misunderstandings. The kind you discover via invoice. Or via post-incident review. Or both.

The clearest definition is architectural: multi-agent orchestration is the coordinated management of multiple specialized AI agents operating as a unified system. The word that matters is coordinated.

Agents that happen to exist in the same environment aren't a multi-agent system. Agents that communicate, delegate, and share state in service of a common goal with a governing layer that tracks what each one is doing, that's orchestration.

The analogy that maps cleanly to this series: if a single AI agent is a skilled individual contributor, a multi-agent system is a team. And just like a human team, output quality depends less on individual capabilities and more on the coordination layer that owns what, how handoffs work, and what happens when something breaks.

A well-structured multi-agent system outperforms a single agent by 81% on complex tasks. The gain comes from two sources: parallelism (subagents work simultaneously rather than sequentially) and specialization (each subagent handles a focused, bounded objective rather than the full, sprawling request).

A poorly structured one amplifies whatever the weakest link gets wrong because every downstream agent treats upstream outputs as ground truth.

Which brings us to the part nobody puts in the demo.

The Four Patterns That Run Production Systems

(And the One You Should Build Last, No Matter How Cool It Sounds)

There's no single correct multi-agent architecture. There are four patterns that dominate in 2026, and choosing the wrong one doesn't just add complexity; it creates architectural debt that compounds with every new agent you add.

The Supervisor Pattern

A single orchestrator agent sits at the center. It receives the high-level goal, breaks it into subtasks, delegates to specialist subagents, collects results, and decides what to do next.

This is the most common pattern in compliance-heavy environments because it provides a clear chain of accountability. Something goes wrong, and something always goes wrong, and you know exactly which agent to interrogate.

The failure mode: the orchestrator is a single point of failure. If it misclassifies a task, the wrong agent gets it. At scale, those misclassifications compound. Financial services firms overwhelmingly favor this pattern for auditability, but they also need robust failover logic for the orchestrator itself.

Otherwise, you've built a very accountable system that is also occasionally a single point of complete collapse.

Which is, technically, a kind of accountability. Just not the helpful kind.

The Router Pattern

A lightweight classifier identifies the request type and dispatches it to the right specialist. Simple, stateless, easier to debug than the Supervisor.

The trade-off is rigidity. It can only handle request types it was designed for. It cannot adapt routing logic dynamically the way a true supervisor can. If your workflow evolves, and it will, the Router requires manual updates to keep pace.

This is fine until it isn't.

The Pipeline Pattern

Agents arranged in a sequence: Agent A processes input, hands output to Agent B, which hands output to Agent C. Extremely transparent. You can inspect every stage. You always know where you are.

Also extremely brittle. Any stage failure stops the entire chain. Works beautifully for well-defined, repeatable processes where the sequence never varies. Breaks spectacularly in ambiguous or dynamic environments.

Translation: excellent for assembly lines. Terrible for anything that looks like the real world.

The Swarm Pattern

Agents communicate directly with each other, self-organize, and adapt without a central controller. Highest fault tolerance when one agent goes down, others route around it. Highest scalability. Hardest to debug by an enormous margin.

If you cannot trace an execution through a system with linear handoffs, you absolutely cannot trace it through a swarm. You will know something went wrong. You will not know what, where, or which agent started it. You will have a very sophisticated system producing incorrect outputs that are completely structurally plausible.

You will also have a thread on Slack titled "What is this charge?" that nobody wants to claim.

This is the pattern the most advanced architectures are moving toward.

Build it last. After you've exhausted what the Supervisor can do. Not because it sounds impressive at the architecture review.

Here's the honest breakdown on all four:

- Supervisor of medium complexity, low fault tolerance (single point of failure), best for compliance and auditability, but breaks under high-volume orchestrator throughput.

- A router with low complexity and medium fault tolerance is best for structured request types and classification workflows, and falls apart with dynamic or ambiguous inputs.

- Pipeline has low complexity, low fault tolerance (chain failure), best for repeatable sequential processes, dangerous the moment any step involves uncertainty.

- Swarm has high complexity, very high fault tolerance, is built for large-scale systems, and is functionally impossible to run without distributed tracing. If you don't have distributed tracing, the Swarm will find new and creative ways to remind you of that.

Start with the Supervisor. Build the Swarm when you've run out of things to improve everywhere else.

(You will not run out. Build the Swarm anyway, eventually, but not first.)

The Two Protocols Running the Whole Stack

If you're building multi-agent systems in 2026, two protocols define the foundation. Understanding them isn't optional; it's the difference between interoperability and lock-in.

MCP Model Context Protocol. Handles how individual agents connect to tools, data sources, and APIs. Think of it as the USB standard for AI agents: if a tool speaks MCP, any MCP-compatible agent can use it without custom integration work.

Anthropic built the standard. It's been widely adopted; every major AI vendor now supports it, with over 10,000 public MCP servers available.

A2A Agent2Agent Protocol. Handles how agents talk to each other. Where MCP is agent-to-tool, A2A is agent-to-agent: discovery, delegation, task lifecycle tracking, and coordination across agent boundaries.

Google launched it as an open standard, explicitly designed to complement MCP rather than compete with it. An A2A-compatible agent broadcasts an "Agent Card" describing its capabilities. Other agents can discover it, delegate tasks to it, and track whether those tasks are completed.

The practical model for a production multi-agent stack:

- A planner agent receives the high-level goal

- It discovers specialist agents via A2A Agent Cards

- Each specialist uses MCP to access the tools, databases, and APIs it needs

- The planner tracks task state through A2A's built-in lifecycle management

- Humans remain in the loop for actions that cross defined approval thresholds

This is the pattern Anthropic's Claude Managed Agents platform is built on: multi-agent coordination in research, previewed as of April 2026, enabling coordinator agents to spawn specialist subagents, each running in its own isolated context thread.

One governance observation worth nailing to the wall: MCP and A2A establish interoperability. Neither establishes governance: who decides which agents can talk to which tools, under what conditions, and with what audit trail?

That layer is still yours to build. Nobody is coming to build it for you.

Nobody is even coming to remind you it exists.

Where the Tools Are Right Now

The three platforms from this series are all moving toward multi-agent capability. At different speeds. With different tradeoffs.

Claude Managed Agents launched multi-agent coordination in research preview on April 8, 2026. The architecture is a coordinator-subagent model: a coordinator delegates subtasks to specialist agents, each running in its own isolated session thread with its own context, tools, and MCP configuration. Threads are persistent; the coordinator can follow up with a subagent from a prior conversation, and that agent retains context from previous turns. Anthropic handles the infrastructure, including sandboxed execution, credential management, automatic error recovery, and session tracing via the Claude Console.

Current constraint: only one level of delegation. Subagents cannot spawn their own subagents yet.

This is the right constraint for now. It keeps the system auditable while the tooling matures. It is extremely difficult to debug a system you cannot fully trace.

Anthropic appears to understand this. Not everyone building on top of these platforms does.

OpenAI Agents SDK uses explicit, typed, clean agent-to-agent delegation. When Agent A completes its task, it transfers execution to Agent B via a structured handoff call rather than ambient context sharing. You always know which agent owns the task, what it received, and what it returned. OpenAI also launched Frontier in February 2026, an enterprise platform designed to give agents the same onboarding, context, permissions, and feedback loops that human employees get.

The clarity is the feature. The weakness of explicit handoffs: infinite handoff loops are the number-one failure mode. Agent A passes to B, B passes to C, C passes back to A, and because routing is non-deterministic, the same input can produce wildly different agent chains on different runs.

You'll know this is happening when you can't explain why the same request produced three different outcomes, and the token bill is behaving strangely.

By "behaving strangely," I mean "growing exponentially while nobody owns the dashboard."

OpenClaw uses sub-agent spawning isolated sessions with their own context, tools, and workspace. The ClawHub marketplace offers over 5,700 skills. The honest accounting from Part 3 holds here: OpenClaw is the most powerful chassis but the least managed. In a multi-agent configuration, all the reliability warnings amplify.

Silent failures, in which an agent confirms completion before the action actually occurs, become harder to detect when no central coordinator audits every output.

The verdict from practitioners who've used all three: most teams end up combining approaches. OpenClaw for the personal assistant layer. Claude Managed Agents for production workloads. OpenAI's SDK for specific pipeline orchestration. Because all three support MCP, tools and data can flow between them.

The stack isn't a competition. It's a menu. Order accordingly.

The New Failure Modes Nobody Warned You About

Single agents fail in understandable ways. Multi-agent systems fail in ways that are architecturally different, harder to trace, and more expensive when they finally surface.

Error amplification. In a single-agent system, a confident wrong answer costs you the time it takes to catch and correct it. In a multi-agent pipeline, that confident wrong answer gets passed downstream and treated as ground truth by every subsequent agent.

By the time the error surfaces as a visible problem, it has influenced three downstream decisions, populated four data fields, and sent two emails. The failure appeared in the first agent. The damage appeared three agents later.

You will spend significant time staring at the wrong agent. Convinced it's the problem. While the actual culprit, three steps upstream, watches calmly from its sandboxed thread.

The accountability vacuum. When a multi-agent system produces an incorrect output, a wrong loan decision, a miscommunicated customer resolution, or a flawed regulatory filing, the question of who owns that failure gets complicated very quickly.

Was it the model? The orchestration layer? The agent who retrieved the wrong data? The team that designed the pipeline? "The system decided" is not a defensible answer when a regulator asks.

The EU AI Act would like you to know that, in certain contexts, this ambiguity can result in a fine of up to 6% of your global annual revenue.

That's not a footnote. That's a quarterly earnings event.

Infinite loops and chain failures. Without a supervisor that detects when agents are cycling, multi-agent systems can loop indefinitely. Agent A asks Agent B. Agent B defers to Agent A. Nobody owns the task. Nobody notices. The loop runs.

In explicit handoff patterns, such as OpenAI's SDK, this is more detectable. In swarm architectures, it's nearly invisible until the token bill arrives.

At which point it is extremely visible.

Cost amplification. Agentic loops already cost 5–25x more per user than simple chat applications. Multi-agent systems multiply that further. The orchestrator makes multiple LLM calls for task decomposition and result aggregation, on top of every worker agent call.

A workflow that costs $0.50 in testing can hit $50,000 per month at 100,000 executions.

Read that one twice. From fifty cents to fifty thousand dollars. With no architectural change. Just scale.

The pattern is consistent enough to be called an industry norm: teams measure token consumption after launch, when the architecture is locked. By then, optimization requires rearchitecting, which costs far more than building cost controls on day one.

Build the cost model before you deploy. Not as a formality. As a survival mechanism.

The observability gap. In traditional software, a failure produces a log entry, an error code, and a stack trace. In a multi-agent system, a failure often produces a confident, well-formatted output that is simply wrong.

Without distributed tracing, the ability to follow a single request through every agent it touched, you cannot diagnose what happened. You just know the output was wrong.

Deploying a multi-agent system without observability infrastructure is the operational equivalent of running a financial reconciliation process with no audit trail and hoping for the best.

Some organizations do this. They stop doing it after the incident.

There is always an incident.

The Autonomy Spectrum: Where Human Judgment Lives

(And Why Only 4% of Teams Have Removed It Entirely)

One of the most important frames for multi-agent systems isn't technical. It's about the relationship between the system and human judgment and where that line sits.

A progressive autonomy spectrum has emerged as the practical governance model:

- At one end: humans in the loop approving every agent action.

- At the other: humans are out of the loop, continuous monitoring but no per-action approval.

- In the middle: humans on the loop agents operate autonomously, but humans receive alerts, review telemetry dashboards, and can intervene when the system flags something for escalation.

The data from production deployments: only 4% of teams currently allow agents to act without human approval.

That's not a failure of confidence in AI. That is mature deployment thinking. The other 96% have decided, after looking at what these systems actually do in production, that autonomous action without oversight is a flavor of risk they are not yet comfortable pricing.

The most successful teams are adopting graduated trust models, where low-risk actions are automated, higher-risk decisions require human oversight, and the threshold between them is explicitly designed before a single agent runs.

Deloitte predicts that 2026 will see the most advanced businesses shift toward human-on-the-loop orchestration, not full autonomy, but structured oversight that scales. Gartner projects that 33% of enterprise software applications will include agentic AI by 2028, up from less than 1% in 2024. Most of it will be operating in this middle ground. Not because enterprises are timid, but because the middle ground is where most failure modes get caught before they become incidents.

Define your autonomy posture before deployment. What runs without approval? What requires a flag but not a stop? What requires human sign-off before proceeding?

These are not questions you answer in the post-mortem.

They are the governance foundation you build before the first agent runs.

What This All Means

The progression through this series has been deliberate. We started with the persona illusion. We built through the architecture for reliable single agents. We traced three tools as complementary layers of the same stack. Multi-agent orchestration is where those layers start to interact and where the discipline required either compounds your results or compounds your mistakes.

What's actually changing in 2026 is not just capability. It's the organizational model for AI. McKinsey identified the shift in November 2025: from passive AI copilots to proactive actors that coordinate across the enterprise. When that happens, the bottleneck stops being model quality and starts being organizational readiness, clear ownership, modular workflows, observability infrastructure, and governance frameworks that can keep pace with deployment speed.

The organizations that get the most out of multi-agent systems aren't building the largest networks. They're the ones that mastered the organizational disciplines, decision rules, escalation logic, and accountability assignment that make individual agents reliable. And then carried those disciplines forward into the coordination layer.

Every failure mode in multi-agent systems traces back to a discipline that worked at the single-agent layer but wasn't carried forward.

By 2028, at least 15% of day-to-day work decisions will be made autonomously through AI agents. 40% of Global 2000 job roles will involve working with AI agents this year. The direction is not in question.

The question is whether you build the system thoughtfully enough to benefit from it or deploy fast enough to become one of the 40% of pilots that fail within six months.

Pick.

The Checklist Before You Add the Second Agent

This is the section that replaces the temptation to just start building.

Before deploying a multi-agent system, verify these conditions. Not most of them. All of them.

1. Your single-agent layer is reliable. If the individual agents aren't working consistently in isolation, orchestrating them won't fix the problems. It will hide them until they're expensive.

2. You have distributed tracing. Agent-level observability infrastructure must be in place before you go to production. Without it, you cannot diagnose failures. You can only observe that something went wrong somewhere and try to feel your way to the cause. That isn't engineering. That's a scene.

3. Failure ownership is assigned. For every output the system produces, a specific human's name is on it. Not "the AI team." Not "the platform." A person. Who is accountable? For outcomes.

4. You have a cost model. Built before deployment, not discovered after. Include orchestrator overhead, parallel subagent calls, retry logic, and context reload costs. Model the scenario where something retries 50 times. Because something will retry 50 times.

5. Your autonomy posture is defined. What runs without approval, what requires a flag, what requires a stop. Written down. Not assumed. Not "we'll figure it out when something breaks."

6. The workflow genuinely needs it. Does this workflow cross system boundaries or require genuine parallelism that a single agent with better tooling cannot handle? If neither of those is true, a single agent with better tooling probably handles it. Don't add complexity for its own sake. Complexity has a cost, and it gets paid in debugging hours at inconvenient times.

(Inconvenient times mean Friday at 6 PM. It always means Friday at 6 PM.)

The Real Truth

The promise of multi-agent orchestration is real. The parallelism is real. The specialization gains are real. The fault tolerance is real. The $35 billion market projection by 2030 is real.

So is the 17x error amplification when you build it wrong.

So is the 40% failure rate within six months.

So is the $50,000 monthly token bill that started as a $0.50 test.

The teams that will build reliable multi-agent systems in 2026 aren't the ones who move fastest. They're the ones who moved deliberately, starting with the simplest architecture that worked, adding complexity only where the evidence demanded it, and carrying the governance disciplines from their single-agent work forward into the coordination layer.

The machines aren't the hard part. They never were.

The hard part is the same thing it's always been: clear ownership, disciplined design, and someone in the room willing to say "we're not ready to deploy this yet."

That someone is usually outvoted.

Build the observability layer anyway.